TL;DR –

- This blog is designed for students of electronics, electrical engineering, RF, and communication systems, helping them understand why antennas fail and how SWR measurement prevents those failures.

- Antennas often underperform or fail not because of poor design, but because impedance mismatch goes undetected without proper SWR measurement, leaving power reflected back toward the transmitter.

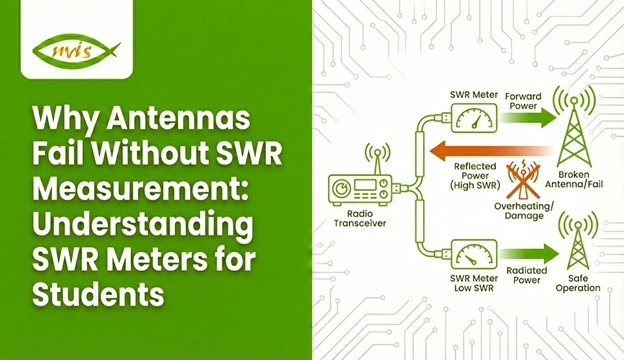

- An SWR meter measures forward and reflected power to indicate how well an antenna is matched to the transmitter and transmission line.

- High SWR causes signal loss, inconsistent results, and potential transmitter damage, making experiments unreliable and unsafe.

- Learning SWR measurement early helps students build strong RF fundamentals, improve antenna tuning, and develop professional troubleshooting and measurement skills.

Antennas are usually presented to students as simple elements such as metal rods, traces, or wires that transmit and receive signals. On paper, they look simple. In practice, however, the antenna is one of the most vulnerable components of any RF or wireless system. Students often run into poor signal strength, limited range, unstable communication, overheating transmitters, or even total system failure, even when using a supposedly correct antenna design. In most cases, the cause is not faulty hardware or an incorrect operating frequency. The real issue is an impedance mismatch that goes unnoticed because SWR was never properly measured.

The Standing Wave Ratio (SWR) is a basic RF parameter that directly indicates how well an antenna is matched to its transmission line and transmitter. Ignoring SWR is one of the most frequent, and often most costly, mistakes in student laboratories and early engineering projects. This is where the SWR meter becomes necessary.
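For readers who want the underlying math, these are the standard textbook definitions, assuming a lossless transmission line. Z0 is the characteristic impedance of the line (often 50 Ω in lab coax), ZL is the antenna's feed-point impedance, Γ is the reflection coefficient, and Vmax and Vmin are the voltage maxima and minima of the standing wave on the line. A perfect match gives Γ = 0 and SWR = 1; total reflection gives |Γ| = 1 and an infinite SWR.

```latex
\Gamma = \frac{Z_L - Z_0}{Z_L + Z_0}, \qquad
\mathrm{SWR} = \frac{V_{\max}}{V_{\min}} = \frac{1 + |\Gamma|}{1 - |\Gamma|}, \qquad
\frac{P_{\mathrm{ref}}}{P_{\mathrm{fwd}}} = |\Gamma|^{2}
```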

This blog explains why antennas fail without SWR measurement, how an SWR meter works, and why learning SWR measurement early is essential when developing effective and reliable antenna systems.

What Is an SWR Meter?

An SWR meter is a specialized RF instrument that measures the standing wave ratio in a transmission system. It does this by comparing:

- Forward power (power traveling from the transmitter toward the antenna)

- Reflected power (power reflected by the antenna back toward the transmitter)

From the ratio of these two quantities, the meter calculates and displays the SWR of the system.
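As a minimal sketch of that calculation, assuming a lossless line so that the reflection coefficient magnitude is the square root of the power ratio, the conversion from the two power readings to SWR looks like this (the function name `swr_from_power` is illustrative, not taken from any meter's firmware):

```python
import math


def swr_from_power(p_forward: float, p_reflected: float) -> float:
    """Return the SWR implied by forward and reflected power readings.

    Assumes a lossless line, so |Gamma| = sqrt(P_ref / P_fwd).
    """
    if p_forward <= 0:
        raise ValueError("forward power must be positive")
    if p_reflected < 0 or p_reflected > p_forward:
        raise ValueError("reflected power must be between 0 and forward power")
    gamma = math.sqrt(p_reflected / p_forward)  # magnitude of the reflection coefficient
    if gamma >= 1.0:
        return math.inf  # total reflection, e.g. an open or shorted line
    return (1 + gamma) / (1 - gamma)


# 100 W forward, 4 W reflected -> |Gamma| = 0.2 -> SWR = 1.2 / 0.8
print(round(swr_from_power(100.0, 4.0), 2))  # 1.5
```

Note that only the ratio matters: 10 W forward with 0.4 W reflected gives the same 1.5:1 reading.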

An SWR meter is one of the most useful learning tools in RF engineering because it bridges theoretical concepts with real-world measurements.

Understanding SWR Meters for Students

For students entering RF and wireless communication, the SWR meter is an important but frequently misunderstood instrument. Antennas, transmitters, and receivers usually get most of the attention, yet the SWR meter plays a vital, often behind-the-scenes role in making sure these elements work together properly and safely.

At its simplest, an SWR meter tells you how well an antenna is matched to its transmission line and transmitter. A good match lets the antenna accept nearly all of the power the transmitter delivers, whereas a poor match reflects part of that power back toward the source. These effects cannot be seen directly, but their consequences, such as signal loss, overheating, and erratic performance, are very noticeable.
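To see where a "good" or "poor" match comes from, the sketch below computes the reflection coefficient and SWR directly from the antenna's impedance. It assumes a lossless 50 Ω line (the `z0=50.0` default is an assumption, not a universal value), and the example loads are arbitrary illustrations rather than measured antennas.

```python
def reflection_coefficient(z_load: complex, z0: float = 50.0) -> complex:
    """Complex reflection coefficient at the load: (Z_L - Z_0) / (Z_L + Z_0)."""
    return (z_load - z0) / (z_load + z0)


def swr_from_impedance(z_load: complex, z0: float = 50.0) -> float:
    """SWR on a lossless line of characteristic impedance z0 terminated by z_load."""
    gamma = abs(reflection_coefficient(z_load, z0))
    return float("inf") if gamma >= 1.0 else (1 + gamma) / (1 - gamma)


# Example loads on a 50-ohm line: matched, 75 ohm, 100 ohm, and a reactive load
for z in (50, 75, 100, 25 + 25j):
    gamma = abs(reflection_coefficient(z))
    print(f"Z_L = {z} ohm: reflected power {100 * gamma ** 2:.1f} %, "
          f"SWR {swr_from_impedance(z):.2f}:1")
```

For a purely resistive load, SWR reduces to the larger resistance divided by the smaller one, so both 100 Ω and 25 Ω on a 50 Ω line give 2:1.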

How Does an SWR Meter Work?

An SWR meter is typically inserted inline between the transmitter and the antenna. Inside the meter, directional couplers sample both forward and reflected RF energy.

The meter then:

- Measures forward power

- Measures reflected power

- Computes the SWR value

- Displays the result using an analog scale or digital readout

This process allows students to instantly see how antenna changes affect system performance.
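Many simple meters rectify the coupler outputs with diode detectors and compare voltages rather than powers. If each detector's output is proportional to RF voltage, the reflection coefficient is just the ratio of the two readings. The sketch below follows the four steps listed above under that assumption of ideal linear detectors; `v_fwd`, `v_ref`, and the `format_reading` display helper are hypothetical names chosen for illustration.

```python
import math


def swr_from_detector_voltages(v_fwd: float, v_ref: float) -> float:
    """Measure forward and reflected levels, then compute SWR.

    Assumes ideal linear detectors, so |Gamma| = v_ref / v_fwd.
    """
    if v_fwd <= 0:
        raise ValueError("no forward signal detected")
    gamma = min(max(v_ref / v_fwd, 0.0), 1.0)  # clamp noise outside the physical range 0..1
    return math.inf if gamma == 1.0 else (1 + gamma) / (1 - gamma)


def format_reading(swr: float) -> str:
    """Display the result the way a simple digital readout might."""
    return "SWR ---" if math.isinf(swr) else f"SWR {swr:.1f}:1"


print(format_reading(swr_from_detector_voltages(2.0, 0.5)))  # SWR 1.7:1
```

Real diode detectors become nonlinear at low signal levels, which is one reason inexpensive meters read less accurately at low power.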

Why Antennas Fail Without SWR Measurement

1. Power Reflection and Signal Loss

With a high SWR, a larger share of the transmitter's power is reflected at the antenna instead of being radiated. That reflected energy travels back down the line, where some of it is dissipated as heat in the cable and the transmitter's output stage. The result is weaker signals, reduced range, and unreliable communication, issues that are particularly evident in student projects.
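To put numbers on "a larger share", the sketch below converts SWR into the fraction of forward power reflected at the antenna and the corresponding mismatch loss in dB. It assumes a lossless line; on a real, lossy cable the figures shift somewhat.

```python
import math


def reflected_fraction(swr: float) -> float:
    """Fraction of forward power reflected at the load for a given SWR."""
    gamma = (swr - 1) / (swr + 1)
    return gamma ** 2


def mismatch_loss_db(swr: float) -> float:
    """Power not delivered to the load because of the mismatch, in dB."""
    return 10 * math.log10(1 / (1 - reflected_fraction(swr)))


for swr in (1.0, 1.5, 2.0, 3.0, 5.0, 10.0):
    print(f"SWR {swr:>4.1f}:1  reflected {100 * reflected_fraction(swr):5.1f} %  "
          f"mismatch loss {mismatch_loss_db(swr):.2f} dB")
```

At 2:1, about 11% of the power is reflected (roughly 0.5 dB of mismatch loss); at 3:1 it is 25% (about 1.25 dB), and the loss climbs quickly beyond that.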

2. Transmitter Stress and Damage

Power reflected from the antenna travels back to the transmitter's output stage. Even though many modern transmitters include protection circuits, repeated operation into a high SWR can still lead to:

- Excessive heat in the output transistors

- Shortened transmitter lifespan

- Automatic power reduction or shutdown

Without SWR measurement, students may unknowingly operate equipment under unsafe conditions.
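To put numbers on transmitter stress: on a lossless line, reflected power equals forward power times |Γ|², with |Γ| = (SWR − 1)/(SWR + 1). The second function below is a purely hypothetical "foldback" rule, included only to illustrate the idea behind automatic power reduction; the 2:1 threshold is an assumption, and real radios use their own manufacturer-specific protection schemes.

```python
def reflected_power_w(p_forward_w: float, swr: float) -> float:
    """Reflected power in watts for a given forward power and SWR (lossless line)."""
    gamma = (swr - 1) / (swr + 1)
    return p_forward_w * gamma ** 2


def foldback_limit_w(p_rated_w: float, swr: float, swr_threshold: float = 2.0) -> float:
    """HYPOTHETICAL protection rule: above the SWR threshold, reduce the allowed
    output so reflected power never exceeds what it would be at the threshold."""
    if swr <= swr_threshold:
        return p_rated_w
    allowed_reflected = reflected_power_w(p_rated_w, swr_threshold)
    return allowed_reflected / reflected_power_w(1.0, swr)


print(reflected_power_w(100.0, 3.0))           # 25.0
print(round(foldback_limit_w(100.0, 3.0), 1))  # 44.4
```

A 100 W transmitter working into a 3:1 mismatch sends 25 W straight back toward its own output stage, which is why protection circuits cut power in such conditions.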

3. Inconsistent Experimental Results

High SWR makes results unpredictable. Two nearly identical experiments can yield different results simply because the antenna system is unstable; with a mismatch, even a different cable length changes the impedance the transmitter sees. This makes learning frustrating and experimental conclusions unreliable.

4. Misdiagnosis of Problems

Students often blame system failures on cables, radios, or software, when many of these problems are actually caused by antenna mismatch. An SWR measurement quickly shows whether the antenna system is the root cause.

How SWR Measurement Improves Antenna Performance

SWR measurement allows students to:

- Tune antenna length accurately

- Optimize antenna placement

- Detect faulty connectors or damaged cables

- Validate theoretical antenna designs

- Achieve consistent, repeatable results

By adjusting the antenna while monitoring SWR, students gain direct insight into RF behavior, an experience that simulation alone cannot provide.
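To make "tune antenna length accurately" concrete: for a simple resonant antenna such as a half-wave dipole, the frequency of minimum SWR moves roughly in inverse proportion to element length, so a common first-order correction is to scale the length by f_measured / f_target. The sketch below assumes a list of (frequency, SWR) readings taken by stepping the transmitter across the band; the readings and function names are hypothetical, and real antennas usually need a couple of iterations because end effects and surroundings also shift resonance.

```python
def min_swr_point(sweep: list[tuple[float, float]]) -> tuple[float, float]:
    """Return the (frequency_hz, swr) pair with the lowest SWR in the sweep."""
    return min(sweep, key=lambda point: point[1])


def suggested_length(current_length_m: float, f_min_swr_hz: float, f_target_hz: float) -> float:
    """First-order correction: resonant frequency scales roughly as 1 / length."""
    return current_length_m * f_min_swr_hz / f_target_hz


# Hypothetical readings for a 1.0 m dipole intended for 146 MHz
sweep = [(140e6, 1.6), (142e6, 1.3), (144e6, 1.8), (146e6, 2.3), (148e6, 2.9)]
f_res, swr_min = min_swr_point(sweep)
new_len = suggested_length(1.0, f_res, 146e6)
print(f"Minimum SWR {swr_min}:1 at {f_res / 1e6:.0f} MHz -> trim to about {new_len:.3f} m")
```

Here the SWR dip sits below the target frequency, so the antenna is too long and the suggested length is shorter; trimming a little less than suggested and re-measuring is safer than cutting too much.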

Types of SWR Meters Students Should Know

Analog SWR Meters

Analog SWR meters use a printed scale and a moving needle. They are widely used in teaching laboratories because the needle shows changes in SWR visually and is easy to interpret.
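Why is the printed SWR scale on an analog meter so uneven? On the common calibrate-then-read style of meter, the operator first sets the forward reading to full scale; after that, the needle deflection in the reflected position is proportional to |Γ|, assuming a linear detector. Each SWR mark therefore sits at (SWR − 1)/(SWR + 1) of full scale. A small sketch under those assumptions:

```python
import math


def needle_fraction(swr: float) -> float:
    """Fraction of full-scale deflection at which a given SWR mark is printed,
    assuming forward was calibrated to full scale and the detector is linear."""
    if math.isinf(swr):
        return 1.0
    return (swr - 1) / (swr + 1)


for mark in (1.0, 1.5, 2.0, 3.0, 5.0, math.inf):
    label = "inf" if math.isinf(mark) else f"{mark:g}"
    print(f"SWR {label:>3}: {100 * needle_fraction(mark):5.1f} % of full scale")
```

The first fifth of the scale covers 1:1 to 1.5:1, while everything from 3:1 to infinity is squeezed into the top half, which is why analog meters are easiest to read near a good match.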

Digital SWR Meters

Digital SWR meters offer numeric displays, better precision, and, in many cases, additional readings such as power level and frequency. They are suited to more advanced labs and modern RF work.

Integrated SWR Measurement

Some modern radios and RF modules have built-in SWR measurement. Although convenient, it hides the measurement process, so standalone SWR meters are more valuable for learning because they expose each step.

Basic SWR Measurement Procedure for Students

- Connect the SWR meter between the transmitter and antenna

- Set the transmitter to the desired frequency

- Measure forward power

- Measure reflected power

- Read the SWR value

- Adjust the antenna and repeat
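The procedure above can be sketched as a simple loop. Connecting the meter and setting the frequency happen on the bench; the code assumes you record forward and reflected power after each adjustment. The readings, labels, and the 1.5:1 target are hypothetical choices for illustration, not recommended values for any particular antenna.

```python
import math


def tuning_log(readings: list[tuple[str, float, float]], target_swr: float = 1.5):
    """Walk through (label, forward W, reflected W) readings taken after each
    antenna adjustment, print the SWR, and stop once the target is met.
    Returns the label of the first adjustment that met the target, or None."""
    for label, p_fwd, p_ref in readings:
        gamma = math.sqrt(p_ref / p_fwd)  # from the forward and reflected readings
        swr = math.inf if gamma >= 1 else (1 + gamma) / (1 - gamma)  # read the SWR
        print(f"{label:<16} SWR {swr:.2f}:1")
        if swr <= target_swr:
            return label
    return None  # target not reached yet: adjust the antenna and repeat


# Hypothetical log from trimming a dipole, 10 W forward each time
log = [("as built", 10.0, 1.6), ("trimmed 10 mm", 10.0, 0.7), ("trimmed 20 mm", 10.0, 0.2)]
print("Target met after:", tuning_log(log))
```

Keeping a written log like this also makes the experiment repeatable, since each adjustment is tied to a measured result.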

Why Students Should Learn SWR Measurement Early

Mastering SWR measurement builds:

- Strong RF fundamentals

- Measurement discipline

- Troubleshooting confidence

- Professional engineering habits

Engineers who understand SWR stand out because they design systems that work reliably beyond simulations.

The Educational Value of an SWR Meter

Unlike many instruments, an SWR meter provides instant cause-and-effect feedback. As learners tune an antenna, they can watch the match improve in real time. This hands-on experience speeds up learning and reinforces fundamental RF concepts.

Conclusion: Reliable Antennas Begin with SWR Measurement

Antennas rarely fail because of theory; they fail because of poor matching and the absence of proper measurement. SWR measurement is not optional; it is fundamental. Without it, antennas perform poorly, transmitters are stressed, and experimental results become unreliable.

More than a test instrument, the SWR meter is a learning companion for every student who works with RF systems. By understanding and using SWR measurement, students move from trial and error to real engineering practice.

For anyone serious about antennas and wireless communication, learning how to measure SWR is not only advisable but essential.

FAQs

SWR (Standing Wave Ratio) shows how well an antenna is matched to the transmitter. A low SWR means power is going to the antenna properly, while a high SWR means power is being reflected back.

Without SWR measurement, antenna mismatch goes unnoticed. This causes weak signals, poor range, and can even damage the transmitter over time.

An SWR meter measures forward and reflected power in an RF system and shows how efficiently power is being delivered to the antenna.

An SWR close to 1:1 is best. For student projects, an SWR below 2:1 is generally safe and acceptable.

Yes. An SWR meter helps students tune antennas correctly, avoid equipment damage, and understand real-world RF behavior better than theory alone.